Galaxy Morphology Classification with the Sloan Digital Sky Survey#

Overview#

Galaxy morphology, the shape and structure of a galaxy, is one of the most fundamental observables in extragalactic astronomy. The Hubble Sequence classifies galaxies into ellipticals (smooth, old stellar populations, passive), spirals (disk + arms, ongoing star formation), and irregulars (merger remnants). Understanding how morphology connects to physical properties like color, luminosity, and stellar mass is central to galaxy evolution, how galaxies form, grow, and quench over cosmic time.

Modern surveys like SDSS produce far more galaxies than can be classified by eye. Machine learning does this automatically and at far larger scale. In this project you will:

Explore galaxy photometric features from SDSS DR17.

Train supervised classifiers to predict morphology.

Build an Artificial Neural Network (ANN) classifier.

Use Bayesian inference and MCMC to fit the galaxy red sequence and quantify parameter uncertainties.

Data#

SDSS DR17: photometric and spectroscopic features

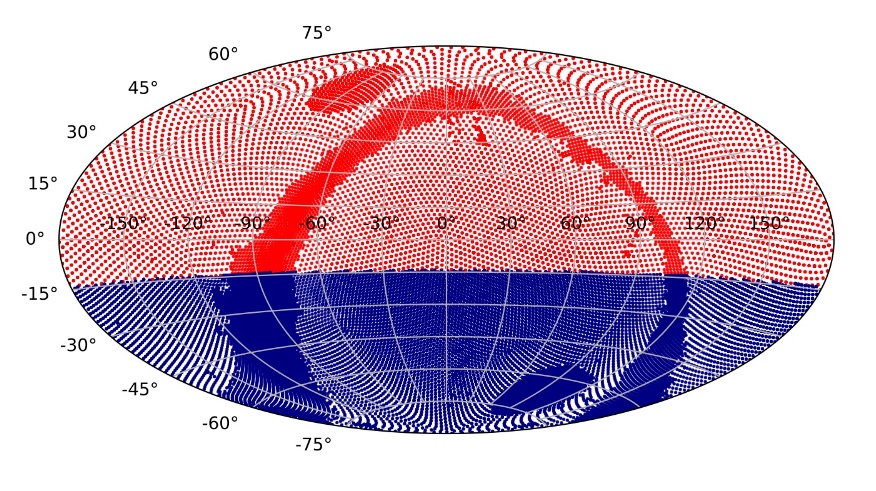

https://skyserver.sdss.org/casjobs/

To acquire the data:

SELECT TOP 10000

p.objid, p.modelMag_u, p.modelMag_g, p.modelMag_r, p.modelMag_i, p.modelMag_z,

p.petroR50_r, p.petroR90_r, p.petroMag_r, s.z AS redshift, s.velDisp

FROM PhotoObj AS p

JOIN SpecObj AS s ON s.bestobjid = p.objid

WHERE s.z BETWEEN 0.01 AND 0.25 AND s.zWarning = 0

AND p.petroMag_r BETWEEN 14 AND 18 AND p.type = 3

Setup#

%pip install scikit-learn tensorflow emcee corner seaborn

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

import warnings; warnings.filterwarnings('ignore')

import emcee, corner

from sklearn.model_selection import train_test_split, cross_val_score

from sklearn.preprocessing import StandardScaler

from sklearn.linear_model import LogisticRegression

from sklearn.ensemble import RandomForestClassifier

from sklearn.metrics import classification_report, confusion_matrix, ConfusionMatrixDisplay

import tensorflow as tf

from tensorflow import keras

from tensorflow.keras import layers

sns.set_theme(style='whitegrid')

SEED = 42

np.random.seed(SEED); tf.random.set_seed(SEED)

%matplotlib inline

Questions#

Question 01#

What is the Hubble Sequence? Describe the main morphological classes (elliptical, lenticular, spiral, irregular) and the physical properties, stellar age, gas content, star formation rate, that distinguish them. What does the color–magnitude diagram of galaxies look like, and what physical processes drive the “red sequence” vs. “blue cloud” bimodality?

Answer by creating and populating cells below (markdown and/or code):

Accessing Data and Feature Engineering#

df_raw = pd.read_csv('phy450data.csv')

df_raw['morphology'] = pd.cut(

df_raw['fracDeV_r'],

bins=[-0.01, 0.3, 0.7, 1.01],

labels=['featured', 'ambiguous', 'smooth']

)

df_raw = df_raw[df_raw['morphology'].isin(['smooth', 'featured'])].copy()

df_raw['morphology'] = df_raw['morphology'].astype(str)

print(df_raw['morphology'].value_counts())

print(f"Total galaxies after labeling: {len(df_raw)}")

df_raw['color_ur'] = df_raw['modelMag_u'] - df_raw['modelMag_r']

df_raw['color_gr'] = df_raw['modelMag_g'] - df_raw['modelMag_r']

df_raw['concentration'] = df_raw['petroR90_r'] / df_raw['petroR50_r']

df_raw['abs_mag_r'] = df_raw['petroMag_r'] - 5*np.log10(df_raw['redshift']*3e5/70) - 25

FEATURES = ['color_ur', 'color_gr', 'concentration', 'petroR50_r', 'abs_mag_r', 'velDisp']

df = df_raw[FEATURES + ['morphology']].dropna()

df = df[df['concentration'].between(1, 5) & df['color_ur'].between(-1, 6)]

print(f"Final clean dataset: {len(df)} galaxies")

print(df['morphology'].value_counts())

Question 02#

For each feature, should smooth (elliptical) or featured (spiral) galaxies have higher values on average? Why? Do the plots confirm our expectations? Which single feature looks most discriminating between the two classes?

Answer by creating and populating cells below (markdown and/or code):

Supervised Classification#

X = df[FEATURES].values

y = (df['morphology'] == 'smooth').astype(int).values

X_train, X_test, y_train, y_test = train_test_split(

X, y, test_size=0.2, random_state=SEED, stratify=y)

scaler = StandardScaler()

X_train_sc = scaler.fit_transform(X_train)

X_test_sc = scaler.transform(X_test)

lr = LogisticRegression(max_iter=500, random_state=SEED)

lr.fit(X_train_sc, y_train)

print(f"Logistic Regression 5-fold CV: "

f"{cross_val_score(lr, X_train_sc, y_train, cv=5).mean():.4f}")

rf = RandomForestClassifier(n_estimators=200, max_depth=10, random_state=SEED, n_jobs=-1)

rf.fit(X_train_sc, y_train)

print(f"Random Forest 5-fold CV: "

f"{cross_val_score(rf, X_train_sc, y_train, cv=5).mean():.4f}")

fig, axes = plt.subplots(1, 2, figsize=(11, 4))

for ax, model, name in zip(axes, [lr, rf], ['Logistic Regression', 'Random Forest']):

ConfusionMatrixDisplay(

confusion_matrix(y_test, model.predict(X_test_sc)),

display_labels=['Featured','Smooth']

).plot(ax=ax, colorbar=False, cmap='Blues')

ax.set_title(name)

plt.tight_layout(); plt.show()

Question 03#

Let’s compare the two baseline classifiers.

a) Looking at the classification_report for each model and compare precision, recall, and F1-score per class. Which model performs better overall?

b) Let’s plot the random forest feature importances. Which features are most discriminating? Does this match our prediction from Question 02?

c) Why is 5-fold cross-validation preferable to evaluating accuracy on a single test split?

Answer by creating and populating cells below (markdown and/or code):

Artificial Neural Network Classifier#

ann = keras.Sequential([

layers.Input(shape=(X_train_sc.shape[1],)),

layers.Dense(128, activation='relu'),

layers.BatchNormalization(),

layers.Dropout(0.3),

layers.Dense(64, activation='relu'),

layers.Dropout(0.3),

layers.Dense(1, activation='sigmoid')

])

ann.compile(optimizer='adam', loss='binary_crossentropy', metrics=['accuracy'])

ann.summary()

history = ann.fit(

X_train_sc, y_train,

validation_split=0.15, epochs=100, batch_size=64,

callbacks=[keras.callbacks.EarlyStopping(patience=10, restore_best_weights=True)],

verbose=1

)

fig, axes = plt.subplots(1, 2, figsize=(12, 4))

for ax, key, title in zip(axes, ['loss','accuracy'], ['Loss','Accuracy']):

ax.plot(history.history[key], label='Train')

ax.plot(history.history[f'val_{key}'], label='Validation')

ax.set_title(title); ax.set_xlabel('Epoch'); ax.legend()

plt.tight_layout(); plt.show()

y_prob = ann.predict(X_test_sc).flatten()

y_pred = (y_prob >= 0.5).astype(int)

print(classification_report(y_test, y_pred, target_names=['Featured','Smooth']))

Question 04#

Let’s analyze the ANN classifier.

a) How does the ANN’s test accuracy compare to the two baseline classifiers from Question 03?

b) We will look at the loss and accuracy curves. Is there overfitting? How does early stopping help, and what does restore_best_weights=True do?

c) Make a histogram of the ANN output probability y_prob, colored by true class.

Are many galaxies near the decision boundary P ≈ 0.5? What might these physically represent?

Bayesian Inference — The Galaxy Red Sequence#

Elliptical galaxies follow a tight red sequence: more luminous ellipticals are systematically redder. We model this linear relation with intrinsic scatter: $\( (u{-}r)_i = \alpha + \beta\, M_{r,i} + \epsilon_i, \qquad \epsilon_i \sim \mathcal{N}\!\left(0,\; \sigma_\mathrm{int}^2 + \sigma_\mathrm{obs}^2\right) \)\( and use `emcee` to sample the posterior over \)(\alpha,, \beta,, \sigma_\mathrm{int})$.

rs = df[(df['morphology']=='smooth') & df['color_ur'].between(1.5, 4.0)]

idx = np.random.default_rng(SEED).choice(len(rs), size=min(800, len(rs)), replace=False)

xd, yd, sig = rs['abs_mag_r'].values[idx], rs['color_ur'].values[idx], np.full(len(idx), 0.05)

def log_likelihood(theta, x, y, se):

a, b, lsig = theta

s = np.sqrt(np.exp(lsig)**2 + se**2)

return -0.5 * np.sum(((y - (a + b*x))/s)**2 + np.log(2*np.pi*s**2))

def log_prior(theta):

a, b, lsig = theta

return 0.0 if (-10<a<15 and -2<b<2 and -5<lsig<2) else -np.inf

def log_post(theta, x, y, se):

lp = log_prior(theta)

return lp + log_likelihood(theta, x, y, se) if np.isfinite(lp) else -np.inf

b0, a0 = np.polyfit(xd, yd, 1)

pos = np.array([a0, b0, np.log(0.1)]) + 1e-3*np.random.randn(32, 3)

sampler = emcee.EnsembleSampler(32, 3, log_post, args=(xd, yd, sig))

sampler.run_mcmc(pos, 2000, progress=True)

flat = sampler.get_chain(discard=300, thin=10, flat=True)

a_med, b_med, lsig_med = np.median(flat, axis=0)

print(f"alpha = {a_med:.3f} +/- {flat[:,0].std():.3f}")

print(f"beta = {b_med:.3f} +/- {flat[:,1].std():.3f}")

print(f"sigma_int = {np.exp(lsig_med):.3f} +/- {np.exp(flat[:,2]).std():.3f} mag")

sp = flat.copy(); sp[:,2] = np.exp(sp[:,2])

corner.corner(sp, labels=[r'$\alpha$', r'$\beta$', r'$\sigma_{\rm int}$'],

quantiles=[0.16, 0.5, 0.84], show_titles=True, color='steelblue')

plt.suptitle('Posterior: Red Sequence Parameters', y=1.01); plt.show()

xr = np.linspace(xd.min(), xd.max(), 100)

pred = flat[np.random.choice(len(flat),200), 0:1] + flat[np.random.choice(len(flat),200), 1:2] * xr

plt.figure(figsize=(8,5))

plt.scatter(xd, yd, s=5, alpha=0.3, color='#e63946', label='Elliptical galaxies')

plt.fill_between(xr, np.percentile(pred,16,0), np.percentile(pred,84,0),

alpha=0.4, color='navy', label=r'$1\sigma$ posterior')

plt.plot(xr, a_med + b_med*xr, color='navy', lw=2, label='Median fit')

plt.xlabel(r'$M_r$'); plt.ylabel('u − r'); plt.gca().invert_xaxis()

plt.title('Bayesian Fit: Red Sequence'); plt.legend(); plt.tight_layout(); plt.show()

Question 05#

Let’s interpret the Bayesian MCMC results.

a) What is the sign and physical meaning of the slope \(\beta\)? What does it imply about the stellar populations of massive elliptical galaxies that they are redder?

b) Are \(\alpha\) and \(\beta\) correlated in the corner plot? Why does a slope–intercept degeneracy arise in a linear fit.

c) The intrinsic scatter \(\sigma_\mathrm{int}\) captures real physical diversity among ellipticals beyond measurement noise. What other astrophysical processes could contribute to scatter in the red sequence?

Answer by creating and populating cells below (markdown and/or code):

Question 06#

a) Compare the three classifiers, and comment about their performance:

b) Bayesian vs. point estimates:** What does MCMC add that a simple least-squares fit cannot? Why does quantifying \(\sigma_\mathrm{int}\) and full parameter uncertainties matter scientifically?

c) Explore any limitations and extensions of this project:

References#

[1] Abdurrouf et al. 2022, ApJS 259, 35 SDSS DR17: https://arxiv.org/abs/2112.02026

[2] Foreman-Mackey et al. 2013, PASP 125, 306 emcee: https://arxiv.org/abs/1202.3665

[3] Conselice 2014, ARA&A 52, 291 Galaxy morphology review: https://arxiv.org/abs/1403.2783

[4] Galaxy Zoo 2 Kaggle dataset: https://www.kaggle.com/datasets/jaimetrickz/galaxy-zoo-2

[5] SDSS CasJobs SQL interface: https://skyserver.sdss.org/casjobs/

Acknowledgements#

Initial version: Ashi Poorey with some guidence from Mark Neubauer

SDSS DR17 data (CasJobs): photometric and spectroscopic features: DR17